It will give you the same result as you were expecting from using serial. One option is to add a new bigint column, fill it with values from the old column and then drop the old column. The second line alters your table with the new default value, which will be determined by the previously created sequence. You have to change all partitions at once, because the column data type of the partitioned table has to be the same as the column data type in the partitions. The START statement defines what value this sequence should start from. In your case the table is address and the column is new_id. OWNED BY statement connects the newly created sequence with the exact column of your table.

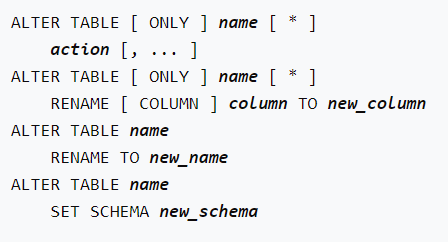

The first line of the query creates your own sequence called my_serial. In case you would like to achieve the same effect, as you are expecting from using serial data type when you are altering existing table you may do this: CREATE SEQUENCE my_serial AS integer START 1 OWNED BY address.new_id ĪLTER TABLE address ALTER COLUMN new_id SET DEFAULT nextval('my_serial') Because serial is not a true data type, but merely an abbreviation or alias for a longer query. If you'll try to ALTER an existing table using this data type you'll get an error. In later versions, this happens only with volatile default values - check if your use case matches any of these two cases.This happened because you may use the serial data type only when you are creating a new table or adding a new column to a table. Note 2: If you happen to use an older Postgres version (up to 10), add the new column without a default, otherwise the whole table will be rewritten. Then change the application code to use the higher precision for amount, and the original one for amount_new. In that case, you'll need three ALTER TABLE statements inside the transaction: amount -> amount_oldĪmount_old -> amount_new (looks confusing first, but the application will be happy) Note 1: as mentioned in comments, there is a chance you cannot swap in the new column and change the application code at the same time. Obviously, test the process in a test environment beforehand. On the other hand, you can revert to the old column until the very end, in case some problems appear. The total time for the change will be (much) more than with a simple data type change. In my experience this is the way you have to plan with the shortest downtime possible. eventually drop the old column, after removing writing to it on the application side.Check beforehand that the application handles the data type change nicely on all read paths. This will normally be a very fast operation. 
I have multiple columns and want to change all in one query along with their datatype. once all rows are updated, you can do a pair of ALTER TABLE for renaming both columns in the same transaction (the new one to amount, the old one to something else).There is a good chance you want to do a VACUUM ANALYZE after a certain number of rows were updated. Do this gradually, so that the system can still operate normally (probably somewhat slower than usual). copy values from the old column to the new one.change application logic so that it writes into both the old and new columns - this way new rows will have the new column filled with data.add the new column with the desired data type.
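The add, backfill, and swap steps described above can be sketched in SQL roughly as follows. This is only an illustration under stated assumptions: table_1, amount and id come from the question, while amount_new, amount_old, the batch size and the id range are hypothetical, and the backfill assumes id is indexed so each batch stays cheap.

```sql
-- Sketch only: amount_new / amount_old and the id ranges are illustrative.

-- 1. Add the new column without a default, so no table rewrite is triggered.
ALTER TABLE table_1 ADD COLUMN amount_new numeric(22, 6);

-- 2. (Application change, not SQL: write to both amount and amount_new.)

-- 3. Backfill one small batch; repeat with the next id range until all
--    rows are covered. Rows already written by the application are skipped.
UPDATE table_1
SET    amount_new = amount
WHERE  id >= 1
AND    id < 100001
AND    amount_new IS NULL;

-- Run occasionally between batches to clean up the dead row versions.
VACUUM ANALYZE table_1;

-- 4. Swap the columns in one transaction.
BEGIN;
ALTER TABLE table_1 RENAME COLUMN amount TO amount_old;
ALTER TABLE table_1 RENAME COLUMN amount_new TO amount;
COMMIT;

-- 5. Later, once the application no longer writes to the old column.
ALTER TABLE table_1 DROP COLUMN amount_old;
```

Running each batch as its own short transaction keeps locks brief, which is the point of doing the copy gradually. The batch size is something to tune in a test environment rather than a recommended value.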

We have a very big Postgres table containing more than 8 billion rows and growing at a very high rate (30 million rows per day). Each partition contains 6 months of data, except the first partition, which contains data for almost 18 months.

Database specs: db.r6g.16xlarge (vCPU: 64, memory: 512, …

The table definition includes `id bigint default nextval('table_1_id_seq'::regclass) not null,`. This table also contains multiple indexes (including partial indexes), for example `create index index_1 on table_1 (user_id, column_2, column_3, column_5)`.

Initially we created the amount column with data type numeric(18, 2). Now we need to support higher precision, up to 6 decimal places, so we need to change the type to numeric(22, 6). Running an ALTER command like the one below is taking a lot of time (hours): `ALTER TABLE table_1 ALTER COLUMN amount type numeric(22, 6)`. NOTE: we ran this command on the table without dropping any indexes.

A few of the different strategies that we are exploring:

a. Drop all the indexes, triggers and foreign keys while the update runs and recreate them at the end.
b. Create a new partitioned table with the exact same configuration, …

NOTE: Our primary goal is to minimise the downtime. If a bigger DB instance can help with that, we are open to exploring that as well. We want to find out whether there are any other strategies for this task, or what we could do additionally or differently in our current strategies to minimise our downtime.
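For reference, the strategy of dropping the indexes during the type change and recreating them afterwards might look roughly like this. It is a sketch of the idea only, not a recommendation: index_1 and its column list are taken from the question, and the real table has further indexes, triggers and foreign keys that would need the same treatment.

```sql
-- Sketch only: shows a single index; the others would be handled the same way.

-- Drop the index before the type change.
DROP INDEX index_1;

-- Changing the scale still rewrites the table and holds an
-- ACCESS EXCLUSIVE lock on it while the statement runs.
ALTER TABLE table_1 ALTER COLUMN amount TYPE numeric(22, 6);

-- Recreate the index once the type change has finished.
CREATE INDEX index_1
    ON table_1 (user_id, column_2, column_3, column_5);
```

Because the table is locked for the whole ALTER, this variant shortens the operation rather than removing the downtime.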
